Create a 3 Node Ceph Storage Cluster

Category: How-to

Ceph is an open source storage platform designed for modern storage needs. Ceph scales to the exabyte level and is designed to have no single point of failure, making it ideal for applications which require highly available, flexible storage.

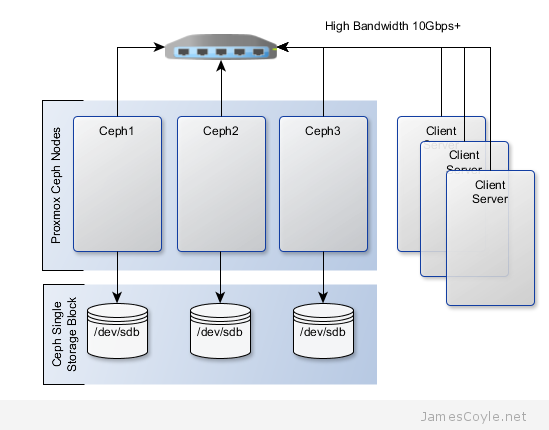

The diagram above shows the layout of an example 3 node cluster with Ceph storage. Two network interfaces can be used to increase bandwidth and redundancy, which helps maintain sufficient bandwidth for storage traffic without affecting client applications.

This example will create a 3 node Ceph cluster with no single point of failure to provide highly redundant storage. I will refer to three host names, ceph1, ceph2 and ceph3, which are all on the jamescoyle.net domain and resolvable via my LAN DNS server. Each of these nodes has two disks: one which runs the Linux OS and one which will be used for Ceph storage. The output below shows the storage available, which is exactly the same on each host. /dev/vda holds the OS install and /dev/vdb is an untouched disk (it has no partition table) which will be used for Ceph.

root@ceph1:~# fdisk -l | grep /dev/vd
Disk /dev/vdb doesn't contain a valid partition table
Disk /dev/mapper/pve-root doesn't contain a valid partition table
Disk /dev/mapper/pve-swap doesn't contain a valid partition table
Disk /dev/mapper/pve-data doesn't contain a valid partition table
Disk /dev/vda: 21.5 GB, 21474836480 bytes
/dev/vda1 * 2048 1048575 523264 83 Linux
/dev/vda2 1048576 41943039 20447232 8e Linux LVM
Disk /dev/vdb: 107.4 GB, 107374182400 bytes

Before getting started with setting up the Ceph cluster, you need to do some preparation work. Make sure the following prerequisites are met before continuing the tutorial.

- SSH keys are set up between all nodes in your cluster; see this post for information on how to set up SSH keys.

- NTP is set up on all nodes in your cluster to keep their clocks in sync (Ceph monitors are sensitive to clock skew). You can install it with: apt-get install ntp

You will need to perform the following steps on all nodes in your Ceph cluster. First, add the Ceph repository and download its key to make the Ceph packages available. Add the following line to a new file in /etc/apt/sources.list.d/.

vi /etc/apt/sources.list.d/ceph.list

Add the below entry, save and close the file.

deb http://ceph.com/debian-dumpling/ wheezy main
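If you would rather not edit the file in vi, the same entry can be written from the shell. This is a minimal sketch: the function name write_ceph_repo is hypothetical, and it simply overwrites the target file with the dumpling/wheezy line above.

```bash
# Hypothetical helper: write the Ceph dumpling repository entry used in this
# guide. Defaults to the guide's path; pass another path to test without root.
write_ceph_repo() {
  local target="${1:-/etc/apt/sources.list.d/ceph.list}"
  # Overwrite (not append) so re-running it never duplicates the entry.
  printf '%s\n' 'deb http://ceph.com/debian-dumpling/ wheezy main' > "$target"
}
```

Run it as root on each node; calling `write_ceph_repo` with no argument writes /etc/apt/sources.list.d/ceph.list, while passing a temporary path lets you check the result without touching /etc/apt.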

Download the key from Ceph’s git page and install it.

wget -q -O- 'https://ceph.com/git/?p=ceph.git;a=blob_plain;f=keys/release.asc' | apt-key add -

Update the local package cache.

sudo apt-get update

Note: if you see the warning below when running apt-get update, the wget command above has failed. This may be because the Ceph git URL has changed.

W: GPG error: http://ceph.com wheezy Release: The following signatures couldn't be verified because the public key is not available: NO_PUBKEY 7EBFDD5D17ED316D
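To spot this failure without reading through the full apt-get update output, you can filter the output for NO_PUBKEY. The sketch below is an illustration only: missing_pubkeys is a hypothetical helper name, and it prints the ID of any key apt reports as missing.

```bash
# Hypothetical helper: read apt-get update output on stdin and print the ID of
# every signing key apt reports as missing (one per line, de-duplicated).
# Empty output means no NO_PUBKEY warning was found.
missing_pubkeys() {
  grep -oE 'NO_PUBKEY [0-9A-F]+' | awk '{ print $2 }' | sort -u
}
```

`apt-get update 2>&1 | missing_pubkeys` prints nothing when the Ceph key was imported correctly, and 7EBFDD5D17ED316D in the failure case shown above. The 2>&1 is there so warnings written to stderr are also checked.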

Run the following commands on just one of your Ceph nodes. I’ll use ceph1 for this example. Update your local package cache and install the ceph-deploy command.

apt-get install ceph-deploy -y

Create a new Ceph cluster, replacing [SERVER] with the hostname or IP address of the first server in your cluster. ceph-deploy writes the new cluster's ceph.conf file into the directory you run it from.

ceph-deploy new [SERVER]

For example

ceph-deploy new ceph1.jamescoyle.net

Now install Ceph onto all nodes which will be used for Ceph storage. Replace the [SERVER] tags below with the host name or IP address of each node in your Ceph cluster, including the host you are running the command on. See this post if you get a key error here.

ceph-deploy install [SERVER1] [SERVER2] [SERVER3] [SERVER...]

For example

ceph-deploy install ceph1.jamescoyle.net ceph2.jamescoyle.net ceph3.jamescoyle.net
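Because the same three host names are repeated in every ceph-deploy command, a small helper can build the fully qualified names from the short ones. This is a convenience sketch, not part of ceph-deploy: ceph_hosts and CEPH_DOMAIN are hypothetical names, and CEPH_DOMAIN defaults to this guide's jamescoyle.net domain.

```bash
# Hypothetical domain setting; defaults to the domain used in this guide.
CEPH_DOMAIN="${CEPH_DOMAIN:-jamescoyle.net}"

# Print one fully qualified host name per short name given as an argument,
# e.g. ceph1 -> ceph1.jamescoyle.net.
ceph_hosts() {
  local short
  for short in "$@"; do
    printf '%s.%s\n' "$short" "$CEPH_DOMAIN"
  done
}
```

`ceph-deploy install $(ceph_hosts ceph1 ceph2 ceph3)` expands to the example command above. The substitution is deliberately left unquoted so that each host becomes a separate argument.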

Create the Ceph monitors and accept the key warning as keys are generated. To avoid a single point of failure you will need at least 3 monitors, and you must use an odd number of monitors (3, 5, 7, etc.). Again, replace the [SERVER] tags with your server names or IP addresses.

ceph-deploy mon create [SERVER1] [SERVER2] [SERVER3] [SERVER...]

Example

ceph-deploy mon create ceph1.jamescoyle.net ceph2.jamescoyle.net ceph3.jamescoyle.net
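The odd-number rule comes from how the monitors agree: a majority of them must be up to form a quorum. The sketch below just does that arithmetic (mon_failures_tolerated is a hypothetical helper name) and shows that a fourth monitor adds no fault tolerance over three.

```bash
# Hypothetical helper: monitors need a strict majority (quorum), so with n
# monitors the cluster keeps working while at most (n - 1) / 2 are down.
# Shell arithmetic uses integer division, which rounds down here.
mon_failures_tolerated() {
  local n="$1"
  echo $(( (n - 1) / 2 ))
}
```

With 3 monitors one can fail; with 4, still only one can fail; with 5, two can fail.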

Now gather the keys from the deployed installation, running this against your primary server only.

ceph-deploy gatherkeys [SERVER1]

Example

ceph-deploy gatherkeys ceph1.jamescoyle.net

It’s now time to start adding storage to the Ceph cluster. The fdisk output at the top of this page shows that the disk I’m going to use for Ceph is /dev/vdb, which is the same for all the nodes in my cluster. In Ceph terminology, we will create an OSD for each disk in the cluster. We could have used a file system location instead of a whole disk but, for this example, we will use a whole disk. Use the command below, changing [SERVER] to the name of the Ceph server which houses the disk and [DISK] to the disk's device name under /dev/ (for example, vdb). The --zap-disk option erases the disk's partition table, so make sure you pick the right disk.

ceph-deploy osd --zap-disk create [SERVER]:[DISK]

For example

ceph-deploy osd --zap-disk create ceph1.jamescoyle.net:vdb

If the command fails, it’s likely because there are existing partitions on your disk. Run fdisk on the disk, press d to delete each partition and then w to write the changes. For example:

fdisk /dev/vdb

Run the osd command for the remaining nodes in your Ceph cluster:

ceph-deploy osd --zap-disk create ceph2.jamescoyle.net:vdb

ceph-deploy osd --zap-disk create ceph3.jamescoyle.net:vdb
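With more nodes, the per-node osd command can be run in a loop. A minimal sketch, assuming every node uses the same data disk as in this guide; create_osds is a hypothetical function name, and CEPH_DEPLOY is a hypothetical override variable used here only to allow a dry run.

```bash
# Hypothetical helper: run "ceph-deploy osd --zap-disk create HOST:DISK" for
# each host given. Assumes all hosts use the same data disk (vdb in this guide).
# WARNING: --zap-disk erases the disk's partition table.
# Set CEPH_DEPLOY=echo to print the commands instead of running them.
create_osds() {
  local disk="$1"
  shift
  local host
  for host in "$@"; do
    # Stop at the first failure so the disk can be fixed before continuing.
    "${CEPH_DEPLOY:-ceph-deploy}" osd --zap-disk create "${host}:${disk}" || return 1
  done
}
```

`CEPH_DEPLOY=echo create_osds vdb ceph2.jamescoyle.net ceph3.jamescoyle.net` prints the two commands above without running them; drop `CEPH_DEPLOY=echo` to execute them. Stopping at the first failure leaves you free to clear the disk with fdisk, as described above, before carrying on.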

We now have to calculate the number of placement groups (PGs) for our storage pool. A storage pool is spread across a set of OSDs (3 in our case), and each OSD should hold around 100 placement groups. Placement groups hold your client data and map it to OSDs, providing redundancy to protect against disk failure.

To calculate your placement group count, multiply the number of OSDs you have by 100 and divide the result by the number of times each piece of data is stored (the number of replicas). The default is to store each piece of data twice, which means that if a disk fails you won’t lose the data because a second copy exists.

For our example,

3 OSDs * 100 = 300

Divided by 2 replicas, 300 / 2 = 150
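The same calculation as a shell sketch: pg_count applies the rule of thumb above using integer division. Ceph's placement group documentation also recommends rounding the result up to the nearest power of two, so a next_pow2 helper is included as an optional extra; both function names are hypothetical.

```bash
# Hypothetical helper implementing the rule of thumb above:
#   PGs = (number of OSDs * 100) / number of replicas
# Shell arithmetic is integer-only, so any remainder is dropped.
pg_count() {
  local osds="$1" replicas="$2"
  echo $(( osds * 100 / replicas ))
}

# Optional: round a PG count up to the next power of two.
next_pow2() {
  local n="$1" p=1
  while [ "$p" -lt "$n" ]; do
    p=$(( p * 2 ))
  done
  echo "$p"
}
```

`pg_count 3 2` gives 150, matching the calculation above, and `next_pow2 150` gives 256.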

Now let’s create the storage pool! Use the command below, substituting [NAME] with the name to give this storage pool and [PG] with the number we just calculated.

ceph osd pool create [NAME] [PG]

For example

ceph osd pool create datastore 150
pool 'datastore' created

You have now completed the setup of the Ceph storage pool. See my blog post on mounting Ceph storage on Proxmox.

13 Comments

Mario Kothe

31-Mar-2014 at 6:51 pm

wget -q -O- ‘https://ceph.com/git/?p=ceph.git;a=blob_plain;f=keys/release.asc’ | apt-key add –

This command does not work on Debian wheezy. Logged in as root.

The error reported is

gpg: no valid OpenPGP data found.

james.coyle

31-Mar-2014 at 8:12 pm

Hi Mario,

It looks as though you have copied the text incorrectly. You are using curly quotes (‘ ’) in your wget command when you should be using straight quotes ('). Please try again with the correct quotes.

Mario Kothe

31-Mar-2014 at 8:47 pm

Right. That was a copy-paste error.

Now I have another problem: I cannot deploy the monitor. Every time I try

ceph-deploy --cluster domino mon create-initial domino-001

I get the following error. Using just create does not solve it either.

[domino-001][INFO ] Running command: sudo /usr/sbin/service ceph -c /etc/ceph/domino.conf start mon.domino-001

[domino-001][INFO ] Running command: sudo ceph --cluster=domino --admin-daemon /var/run/ceph/domino-mon.domino-001.asok mon_status

[domino-001][ERROR ] admin_socket: exception getting command descriptions: [Errno 2] No such file or directory

Mario Kothe

31-Mar-2014 at 8:49 pm

Forgot: maybe the current complete config helps.

[global]

fsid = xxxx [replaced]

mon_initial_members = domino-001

mon_host = 192.168.22.10

auth_cluster_required = cephx

auth_service_required = cephx

auth_client_required = cephx

filestore_xattr_use_omap = true

public network = 192.168.25.0/255.255.255.0

cluster network = 192.168.22.0/255.255.0

Mario Kothe

31-Mar-2014 at 9:42 pm

Is it possible that there is a bug in the new release 0.72? If I try the following:

ceph-deploy --cluster domino mon destroy domino-001.ceph.heliopolis.net

I get what looks like seg faults:

[domino-001.ceph.heliopolis.net][WARNIN] 2014-03-31 23:42:18.115832 7f352fd56700 0 -- :/1022380 >> 192.168.22.10:6789/0 pipe(0x16592a0 sd=3 :0 s=1 pgs=0 cs=0 l=1 c=0x1659500).fault

[domino-001.ceph.heliopolis.net][WARNIN] 2014-03-31 23:42:21.116229 7f352fc55700 0 -- :/1022380 >> 192.168.22.10:6789/0 pipe(0x1657db0 sd=4 :0 s=1 pgs=0 cs=0 l=1 c=0x1658010).fault

[domino-001.ceph.heliopolis.net][WARNIN] 2014-03-31 23:42:24.116824 7f352fd56700 0 -- :/1022380 >> 192.168.22.10:6789/0 pipe(0x165b4a0 sd=4 :0 s=1 pgs=0 cs=0 l=1 c=0x165b700).fault

[domino-001.ceph.heliopolis.net][WARNIN] 2014-03-31 23:42:27.117904 7f352fc55700 0 -- :/1022380 >> 192.168.22.10:6789/0 pipe(0x16569b0 sd=4 :0 s=1 pgs=0 cs=0 l=1 c=0x1656c10).fault

[domino-001.ceph.heliopolis.net][WARNIN] 2014-03-31 23:42:30.119369 7f352fd56700 0 -- :/1022380 >> 192.168.22.10:6789/0 pipe(0x7f3528000c90 sd=4 :0 s=1 pgs=0 cs=0 l=1 c=0x7f3528000ef0).fault

[domino-001.ceph.heliopolis.net][WARNIN] 2014-03-31 23:42:33.120081 7f352fc55700 0 -- :/1022380 >> 192.168.22.10:6789/0 pipe(0x7f3528003010 sd=4 :0 s=1 pgs=0 cs=0 l=1 c=0x7f3528001dc0).fault

[domino-001.ceph.heliopolis.net][WARNIN] 2014-03-31 23:42:36.120654 7f352fd56700 0 -- :/1022380 >> 192.168.22.10:6789/0 pipe(0x7f3528003620 sd=4 :0 s=1 pgs=0 cs=0 l=1 c=0x7f3528003880).fault

[domino-001.ceph.heliopolis.net][WARNIN] 2014-03-31 23:42:39.122456 7f352fc55700 0 -- :/1022380 >> 192.168.22.10:6789/0 pipe(0x7f35280022f0 sd=4 :0 s=1 pgs=0 cs=0 l=1 c=0x7f3528002550).fault

Mario Kothe

31-Mar-2014 at 10:06 pm

OK. I ran purgedata and purge, then did a clean install, this time without a user-defined cluster name. It looks like it works now, up to the monitor part.

james.coyle

1-Apr-2014 at 11:32 am

I’m glad you got things going again.

Tawny Barnett

9-May-2014 at 8:28 pm

Hello James,

I’m using VMs running Ubuntu 12.04 to test this out and I’m getting the following error:

partition-guid=2:e618f906-4715-4a2e-9f22-9529061adb92', '--typecode=2:45b0969e-9b03-4f30-b4c6-b4b80ceff106', '--mbrtogpt', '--', '/dev/sdb']' returned non-zero exit status 4

[ceph01][DEBUG ] ****************************************************************************

[ceph01][DEBUG ] Caution: Found protective or hybrid MBR and corrupt GPT. Using GPT, but disk

[ceph01][DEBUG ] verification and recovery are STRONGLY recommended.

[ceph01][DEBUG ] ****************************************************************************

[ceph01][DEBUG ] GPT data structures destroyed! You may now partition the disk using fdisk or

[ceph01][DEBUG ] other utilities.

[ceph01][DEBUG ] The operation has completed successfully.

[ceph01][DEBUG ] Information: Moved requested sector from 34 to 2048 in

[ceph01][DEBUG ] order to align on 2048-sector boundaries.

[ceph01][ERROR ] RuntimeError: command returned non-zero exit status: 1

[ceph_deploy.osd][ERROR ] Failed to execute command: ceph-disk-prepare --zap-disk --fs-type xfs --cluster ceph -- /dev/sdb

[ceph_deploy][ERROR ] GenericError: Failed to create 1 OSDs

Can you please help?

ddenia

19-Oct-2014 at 12:45 pm

I installed Ceph on two nodes (2 mons, 2 OSDs on XFS), used RBD and mounted the pool on two different Ceph hosts. When I write data through one of the hosts, I do not see the data on the other. What’s wrong?

Sakhi

31-Oct-2014 at 10:35 am

Running the command ceph-deploy mon create ceph2 ceph3 results in the error below:

[ceph-node3][ERROR ] admin_socket: exception getting command descriptions: [Errno 2] No such file or directory

But mon.ceph1 gets created with no errors. What could I be doing wrong?

sjacobs

25-Nov-2014 at 6:59 pm

To fix: [ceph-node3][ERROR ] admin_socket: exception getting command descriptions: [Errno 2] No such file or directory

Step 1:

You need to modify the ceph.conf file in the directory that you are running your ceph-deploy commands in.

Add the names of all the monitor nodes you will be using, in comma-delimited format, like this:

mon_initial_members=hostname1,hostname2,hostname3

mon_host=ipaddr1,ipaddr2,ipaddr3

(Note: you can probably put hostnames in the mon_host argument instead of hard-coded IP addresses, but this worked for me.)

Step 2: At the bottom of the ceph.conf file, add a new parameter specifying the public network and mask:

public_network = ipaddress/mask (e.g. 192.168.0.0/24)

Step 3: Save the changes to the ceph.conf file.

Step 4: In the same directory as the modified ceph.conf file, run the following command:

ceph-deploy --overwrite-conf mon create-initial

Conclusion: this will automatically create mon nodes on the hostnames/IP addresses you specified and create a quorum amongst them. I hope this helps somebody else; it took me hours to figure this out :(

snpz

12-Feb-2015 at 9:13 pm

Hi!

First of all, thanks for this manual. Really helpful!

But I have some unanswered questions about this setup:

1) Is it possible in a Ceph cluster to have OSDs (/dev/vdb) with different sizes?

2) What happens in this setup if I just shut down one of the servers and then turn it on again? What will happen to the OSDs and the cluster?

Thanks a lot!

Martins

Zabidin

31-Mar-2016 at 3:54 am

If I have mon1, mon2, osd1, osd2, osd4, osd5, can I follow this tutorial? How do I set up the monitors so they work properly? What are the steps for my setup?